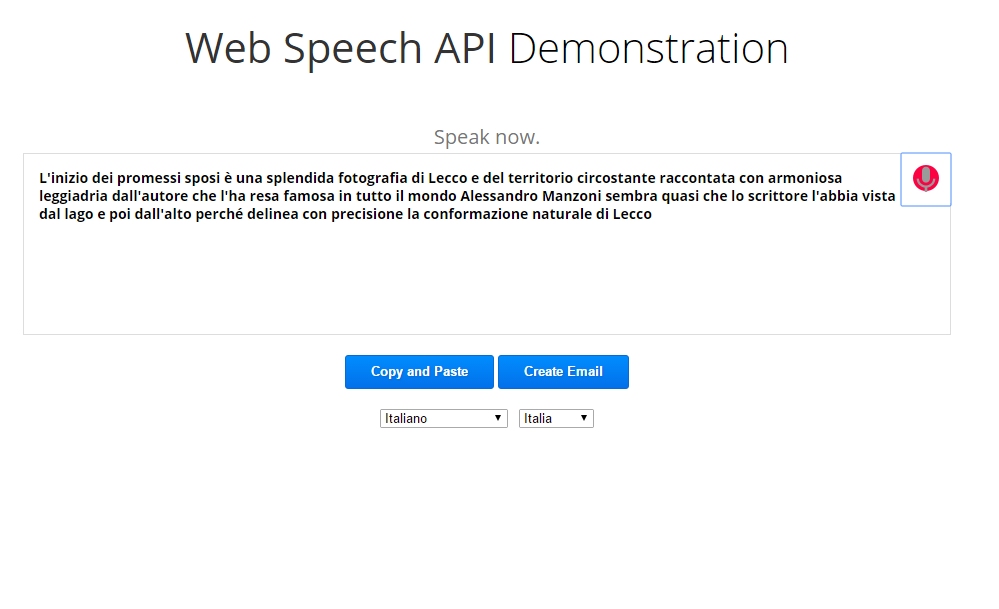

If you're running Chrome version 11, you can test out the new speech capabilities by going to their simple test page. Genius! But how does it work? I started digging around in the Chromium source code to find out whether speech recognition is implemented as a library built into Chrome, or whether the audio is sent back to Google for processing. I know I've seen the Sphinx libraries in the Android build, but I was sure it was the latter: the speech recognition was really good, and that's really hard to do without really good language models, which are not something you'd be able to build into a browser. I found the files I was looking for in the Chromium source repo. It looks like the audio is collected from the mic and then passed via an HTTPS POST to a Google web service, which responds with a JSON object containing the results. Looking through their audio encoder code, the audio can be either FLAC or Speex, but the Speex appears to be some sort of specially modified version. I'm not sure what it is, but it just didn't look quite right.
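To make the request/response flow a little more concrete, here is a minimal TypeScript sketch of the client side picking the best transcript out of such a JSON reply. The response shape (a numeric `status` plus a `hypotheses` array of `{ utterance, confidence }` objects) and the helper name `bestTranscript` are assumptions for illustration only; the post does not show the actual format Google's service returns.

```typescript
// ASSUMED response shape: these field names are illustrative,
// not a documented Google format.
interface Hypothesis {
  utterance: string;
  confidence?: number; // assumed to be in [0, 1] when present
}

interface RecognitionResponse {
  status: number; // assumed: 0 means success
  hypotheses: Hypothesis[];
}

// Hypothetical helper: returns the highest-confidence transcript, or null
// if the service reported an error or returned no hypotheses.
function bestTranscript(body: string): string | null {
  const parsed = JSON.parse(body) as RecognitionResponse;
  if (parsed.status !== 0 || !Array.isArray(parsed.hypotheses)) {
    return null;
  }
  let best: Hypothesis | null = null;
  for (const h of parsed.hypotheses) {
    // A missing confidence is treated as 0, so scored hypotheses win.
    if (best === null || (h.confidence ?? 0) > (best.confidence ?? 0)) {
      best = h;
    }
  }
  return best ? best.utterance : null;
}

// Usage with a made-up sample body.
const sample = JSON.stringify({
  status: 0,
  hypotheses: [
    { utterance: "hello world", confidence: 0.92 },
    { utterance: "yellow world", confidence: 0.41 },
  ],
});
console.log(bestTranscript(sample)); // prints "hello world"
```

Ranking by confidence rather than taking the first entry keeps the sketch correct even if the service does not return hypotheses pre-sorted.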

Just yesterday, Google pushed version 11 of their Chrome browser into beta, and along with it, one really interesting new feature: support for the HTML5 speech input API. This means that you'll be able to talk to your computer, and Chrome will be able to interpret what you say. This feature has been available for a while on Android devices, so many of you will already be used to it and will welcome the new feature. I've posted an updated version of this article here, using the new full-duplex streaming API. Speech Recognition Anywhere expands the capabilities of the Web Speech API in both Chrome and Edge, allowing users to control the web or fill out documents and forms by voice.

Regrettably, the Web Speech API's speech recognition falls short on mobile iOS devices, including Google Chrome for iOS, because of the restrictions Apple imposes on iOS. Since the WebKit Speech Recognition API (webkitSpeechRecognition) is absent on iOS, the web platform offers no straightforward solution there. Nonetheless, there are alternative approaches worth considering.

One option is to integrate a third-party speech recognition service into your web app instead of relying solely on the browser's native functionality. Services such as Google Cloud Speech-to-Text, IBM Watson Speech to Text, and Microsoft Azure Speech to Text provide Software Development Kits (SDKs) or Application Programming Interfaces (APIs) for adding speech recognition capabilities to a web app.

Alternatively, if you specifically need speech recognition on iOS, building a native iOS app might be the answer. Keep in mind, however, that this approach may entail additional costs and dependencies. Using Apple's speech recognition APIs, you can develop an application tailored to iOS devices. By connecting your web app to this native counterpart through techniques such as deep linking or custom URL schemes, iOS users can switch seamlessly to the native app for speech recognition while continuing to use your web app for everything else.
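The decision between these options can be sketched as a small feature-detection routine. This is a minimal TypeScript sketch under several assumptions: `planSpeechInput`, the `exampleapp://dictate` custom URL scheme, its `returnUrl` query parameter, and the `isIOS` / `hasNativeApp` flags are all hypothetical, and how you actually detect iOS or an installed companion app is out of scope here. In a browser you would pass `globalThis` as the first argument; passing the object in explicitly keeps the logic testable outside a browser.

```typescript
// Possible outcomes: use the browser's Web Speech API, fall back to a
// third-party cloud service, or hand off to a companion native iOS app.
type SpeechPlan =
  | { kind: "native" }
  | { kind: "cloud" }
  | { kind: "deepLink"; url: string };

// Only the two constructor names matter; the rest of the global object is ignored.
interface SpeechGlobals {
  SpeechRecognition?: unknown;
  webkitSpeechRecognition?: unknown;
}

// HYPOTHETICAL custom URL scheme registered by a companion iOS app.
const APP_SCHEME = "exampleapp://dictate";

function planSpeechInput(
  g: SpeechGlobals,
  isIOS: boolean, // assumed to be detected elsewhere
  hasNativeApp: boolean, // assumed to be known elsewhere
  returnUrl: string, // page the native app should send the user back to
): SpeechPlan {
  // Chrome exposes the webkit-prefixed constructor, so check both names.
  const ctor = g.SpeechRecognition ?? g.webkitSpeechRecognition;
  if (typeof ctor === "function") {
    return { kind: "native" };
  }
  if (isIOS && hasNativeApp) {
    // Encode the return URL so its own ":", "/", "?" and "=" survive as one parameter.
    const url = `${APP_SCHEME}?returnUrl=${encodeURIComponent(returnUrl)}`;
    return { kind: "deepLink", url };
  }
  // No native recognizer and no app to hand off to: use a cloud service.
  return { kind: "cloud" };
}

// Usage: simulate an iOS browser without webkitSpeechRecognition.
const plan = planSpeechInput({}, true, true, "https://example.com/form");
console.log(plan);
```

Checking for the recognizer's presence, rather than sniffing the browser name, means the web app automatically uses native recognition wherever the API does exist and only falls back where it is missing.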
